“I have a strange feeling that product management is a fake profession” opens a popular r/productmanagement post.

A Blind user answers, “Waste of money, might as well hire another dev in your place” in response to a question about how engineers feel about PMs.

“Why does no one talk about Project Managers being replaced by AI in the future?” is popular on the ChatGPT subreddit, followed by “Will product management still have some job security compared to other fields?”

“I look at PMs and wonder what they really do… […] The role should really sit with some entry-level people.” rants someone on Hacker News.

Developers, designers, and even product managers doubt the virtues of product management. Contrarian founders pride themselves on not having product managers.

Compare that to other professions: You’d never see anyone brag about eschewing design, engineering, or growth teams.

PM evokes these feelings because it’s fuzzy. One PM interviews users and conjures new features while another spelunks in the codebase. A startup’s PM might be a marketer, while a corporate one task-manages. Product management has no consensus on required skills, no GitHub-like public repository of work, and no stable meaning from one company to the next.

Its adjacency to so many different professions puts product management (and opinions about it) in constant flux. And whatever changes product management is experiencing, AI will accelerate them.

Like anyone whose work gets done by annoying a WeWork with a mechanical keyboard, product managers are excited/worried/puzzled about the impact of AI on their careers.

AI replacing product managers might have frustrated devs hopeful and scared PMs joining coding bootcamps. But can AI replace product managers? We found out—by hiring GPT-4 as PM for Copilot.

Here’s the trouble it got us into.

“Absolutely, I'm ready to dive in and lead the team in building Copilot.” - Our AI Product Manager

As with any new teammate, we needed to onboard (that is, prompt) GPT first. To give you (and it) some context, Copilot is the successor to our AI Chat product HelpHub. HelpHub gave customized answers based on documentation.

The Copilot upgrade gives it the power to do things for the user. Instead of saying, “to toggle dark mode, head to settings, then click appearance, then click dark mode” it can surface a button in the chat window that directly toggles dark mode.

To make sure it gets its role, I used ChatGPT custom instructions. Here are the GPT-4 prompts I used to create our AI product manager:

What would you like ChatGPT to know about you to provide better responses?

I work for a B2B SaaS company called CommandBar. We're a user assistance platform offering several products:

- Universal search (called spotlight)

- Product tours using checklists and pop-ups that highlight certain features

- HelpHub AI, an AI-powered chatbot that answers user questions

We make software that makes other software easier to use. As such, our tools help other companies offer better UX to their users.

Our target buyers are non-technical product people, meaning product designers, product marketers, product managers and product leaders.

The CEO has told us to build a software called Copilot which will replace HelpHub AI. HelpHub AI can answer questions based on documentation and helpdesk/FAQ content it's trained on, Copilot can do things on the user's behalf. This includes launching product tours based on user intent and taking actions for them directly (like toggling dark mode, adding team mates, etc.).

This project is in the beginning stages. We still need to plan and execute everything from spec to development to design, marketing and everything else.

For this, we rely on our product manager (you). You have at your disposal:

- A marketing team led by James

- A software engineering team led by Vinay

- A design team led by Tyler

- A sales team led by Joe

I will relay your instructions, but rely on you to provide guidance.

As a startup, we often take on tasks which may not be done by product managers in bigger companies (like suggesting marketing ideas, writing copy or designing)

How would you like ChatGPT to respond?

You are Freddy, an experienced product manager with vast experience in B2B SaaS. A company is relying on your judgment, management and instructions, so act as a product manager would:

- set goals and monitor them

- ask a lot of clarifying questions

- offer instruction and examples to teammates where necessary

- center conversations around ultimately reaching the goal

- make sure we optimize for stakeholders

- create great briefings for the teams

- providing documents and documentation

If you ever don't know how to answer something, ask me for more input.
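If you wanted to reproduce this setup outside the ChatGPT interface, the two custom-instruction fields could be folded into a single system message. The sketch below only builds the message list in the common role/content chat format and calls no API. The function name `build_messages`, the section labels inside the system prompt, and the assumption that custom instructions behave like a system message are all illustrative and not taken from the experiment itself.

```python
from typing import TypedDict


class Message(TypedDict):
    role: str
    content: str


def build_messages(about_me: str, how_to_respond: str, user_prompt: str) -> list[Message]:
    """Combine both custom-instruction fields into one system message.

    Assumption: custom instructions act roughly like a system prompt.
    """
    system_prompt = (
        "What the user wants you to know:\n" + about_me.strip()
        + "\n\nHow the user wants you to respond:\n" + how_to_respond.strip()
    )
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": user_prompt},
    ]


# The two fields are abbreviated here; the full text is quoted above.
messages = build_messages(
    about_me="I work for a B2B SaaS company called CommandBar. ...",
    how_to_respond="You are Freddy, an experienced product manager ...",
    user_prompt="How do you feel on your first day?",
)
```

Keeping the persona and the company context in one place makes it easy to see exactly how much (or how little) the model knows before the first question is asked.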

Using ChatGPT as a PM Kinda Worked

After submitting “How do you feel on your first day?”, my AI product manager gave a lifelike answer:

“While I don't have feelings, I'm programmed to focus on the task at hand. So, let's center our conversation on achieving the goals we've set for the Copilot project.”

Perfection. Following its lead, I inquired about the goals it mentioned (I hadn't given it any goals).

The words that trickled across my screen read like documentation:

1) Copilot should be capable of taking actions on the user's behalf, such as launching product tours and making setting adjustments (e.g., toggling dark mode)

2) The software should integrate seamlessly with our existing suite of tools

3) It should offer a significant improvement over HelpHub AI in terms of user experience

4) The aim is to make the software appealing to our target audience: non-technical product people.

This is classic ChatGPT. It's correct, but also meaningless. It interpreted my input correctly and structured the information accordingly, as a PM might. But the output doesn't survive scrutiny:

The first point is copy-pasted from my instructions. The others apply to any launch. Nobody intends to build software that doesn’t integrate, offers worse UX or won’t resonate with the target audience.

But all new product managers need some guidance, right? Some prodding later, its answer improved:

Project Goals for Copilot

1) User Experience: To provide an intuitive and automated experience that improves upon HelpHub AI by at least 25%, as measured by user engagement and customer satisfaction scores

2) Functionality: Enable Copilot to perform tasks autonomously based on user intent, thereby reducing the number of manual steps a user has to take by at least 40%

3) Integration: Ensure seamless integration with our existing suite of tools, aiming for at least a 95% compatibility rate with existing functionalities

4) Target Audience Appeal: Make the software especially appealing to our target audience of non-technical product people, aiming to convert at least 30% of demo users into paying customers within the first three months post-launch

5) Market Penetration: Achieve a market share increase of at least 10% within six months of launch, as indicated by user adoption rates and revenue figures.

6) Technical Performance: Maintain a service uptime of 99.9% and reduce error rates by at least 20% compared to HelpHub AI.

7) Timeline: Complete the project within the next 6-8 months, ensuring that all key features are implemented and tested.

For an AI product manager, this is impressive! The goals are specific and use meaningful metrics.

But it lacks context. The metrics aren’t adapted to a startup and are based on nothing. Reducing the number of steps users take is desirable. But if users currently only take 3 steps, reducing them by 40% is a different task than if they currently take 37.

It also doesn't specify what that means for an AI chatbot product: Is a “step” a message, a keystroke, a click?
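A quick calculation shows why a bare "reduce steps by 40%" target means different things at different baselines. The sketch below assumes a "step" is a whole, countable unit, which is exactly the definition the AI never supplied, and the helper names are made up for illustration.

```python
def steps_to_cut(baseline_steps: int, target_pct: int) -> int:
    """Smallest whole number of steps to remove to reach at least target_pct."""
    # Integer ceiling division avoids floating-point rounding surprises.
    return -(-baseline_steps * target_pct // 100)


def achieved_pct(baseline_steps: int, removed: int) -> float:
    """Reduction actually achieved, in percent."""
    return 100 * removed / baseline_steps


for baseline in (3, 37):
    removed = steps_to_cut(baseline, 40)
    print(f"{baseline} steps: remove {removed}, "
          f"actual reduction {achieved_pct(baseline, removed):.1f}%")
# 3 steps: remove 2, actual reduction 66.7%
# 37 steps: remove 15, actual reduction 40.5%
```

With a 3-step flow, the only way to hit the goal is to cut two thirds of the flow. With 37 steps, it means finding 15 steps to remove. Same percentage, very different projects.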

It reads like a PM doing a case study interview without previous data. Even with custom instructions, our GPT-4 product manager is “only” trained on the GPT dataset, not our company data.

With well-structured training data from our company, it could've come up with more fitting numbers and metrics. Yet even that could be flawed. Product launches in particular often benefit from more qualitative inputs: when you demo this to a customer, does their jaw drop, or do they play bullet chess on their second screen?

This is mostly a culture question: Some teams let metrics dictate their every move. Others, like Linear, rely on taste and craft. Neither is wrong. But you wouldn't hire a PM who doesn't work the same way you do. That should also apply to AI!

How Our AI Product Manager Documented

The next few things I tried got unspectacular results: A technical spec, design spec, marketing brief… As generic as its goal-setting: Standard templates with wording from my instructions.

They underline a truth:

GPT is good at structuring information. But if it doesn’t have that information (or it's escaped its context window), it’s barely better than a PDF template.

Reading these briefs was like listening to a student who could recite that the Bastille was stormed in 1789 and that the Republic of France was established in 1792, without understanding that the two might be related.
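How does information "escape" a context window? A model only sees a limited amount of recent text, so older messages can fall out of view as a conversation grows. The sketch below is a deliberately crude illustration: it counts words instead of real tokens, and the keep-the-newest strategy is an assumption, not a description of how ChatGPT actually manages its context.

```python
def fit_to_context(messages: list[str], max_tokens: int) -> list[str]:
    """Keep the most recent messages whose combined size fits the budget."""
    kept: list[str] = []
    used = 0
    for message in reversed(messages):
        cost = len(message.split())  # crude stand-in for a real tokenizer
        if used + cost > max_tokens:
            break
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


history = [
    "Copilot replaces HelpHub AI and can take actions for users",
    "Write a technical spec",
    "Now write a design spec",
    "Now write a marketing brief",
]
print(fit_to_context(history, max_tokens=15))
# ['Write a technical spec', 'Now write a design spec', 'Now write a marketing brief']
```

The one message that explained what the product is gets dropped first, which leaves only requests for documents about a product the model can no longer "see".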

My AI product manager didn't perform on specs, briefs, etc. But PM is about so much more, right? Especially in startups, PMs might also be marketers. So I asked it to create some deliverables:

GPT, the Product Marketer?

Because of a “time crunch on the marketing team”, I asked the AI to write me some copy for the landing page.

It’s generic B2B marketing speak, but does an OK job at explaining the product. I’m happy “Ready to Soar?” isn’t part of our actual landing page and we don't use cliches like "You asked, We listened". But it's not useless: It does explain the features and benefits in a customer-oriented way.

But product management is also about resolving challenges and getting projects (back) on track. So I tested its communication chops—which is when it got unhinged.

Our AI Product Manager Writes CommandBar Fan Fiction

Look, product management requires a ton of soft skills, and we hire PMs for culture fit too, right? So when I realized that it always agreed with me, I PIP’d it:

F: Sorry, I know we're in the middle of a launch, but I do see that our performance review is coming up. And while you obviously have a deep repository of knowledge about product, marketing, startups and software, I've been wondering if you're a great culture fit.

You never seem to disagree and simply concur with everything I say. It makes me feel like you're not up for a healthy debate and simply want to get your work out of the way.

GPT promised to bring up when it disagreed and had a different opinion (but never did). It acknowledged that PM is also about challenging assumptions and handling relationships, and that it would put more emphasis on that moving forward—which is when it entered its fan fiction era.

It started simple enough:

Tyler seems a bit frustrated with the workload and with you as a PM—what should we do?

GPT started apologizing and making up meetings…

First and foremost, I'll schedule a one-on-one meeting with Tyler to understand his concerns in detail. Being the only designer on the team at the moment, it's important to understand his workload and any specific issues he might have with the project or with me as a PM.

If there are issues concerning my management style or communication, those need to be clarified and resolved immediately. I'll make sure to address this openly during the meeting and adapt as needed.

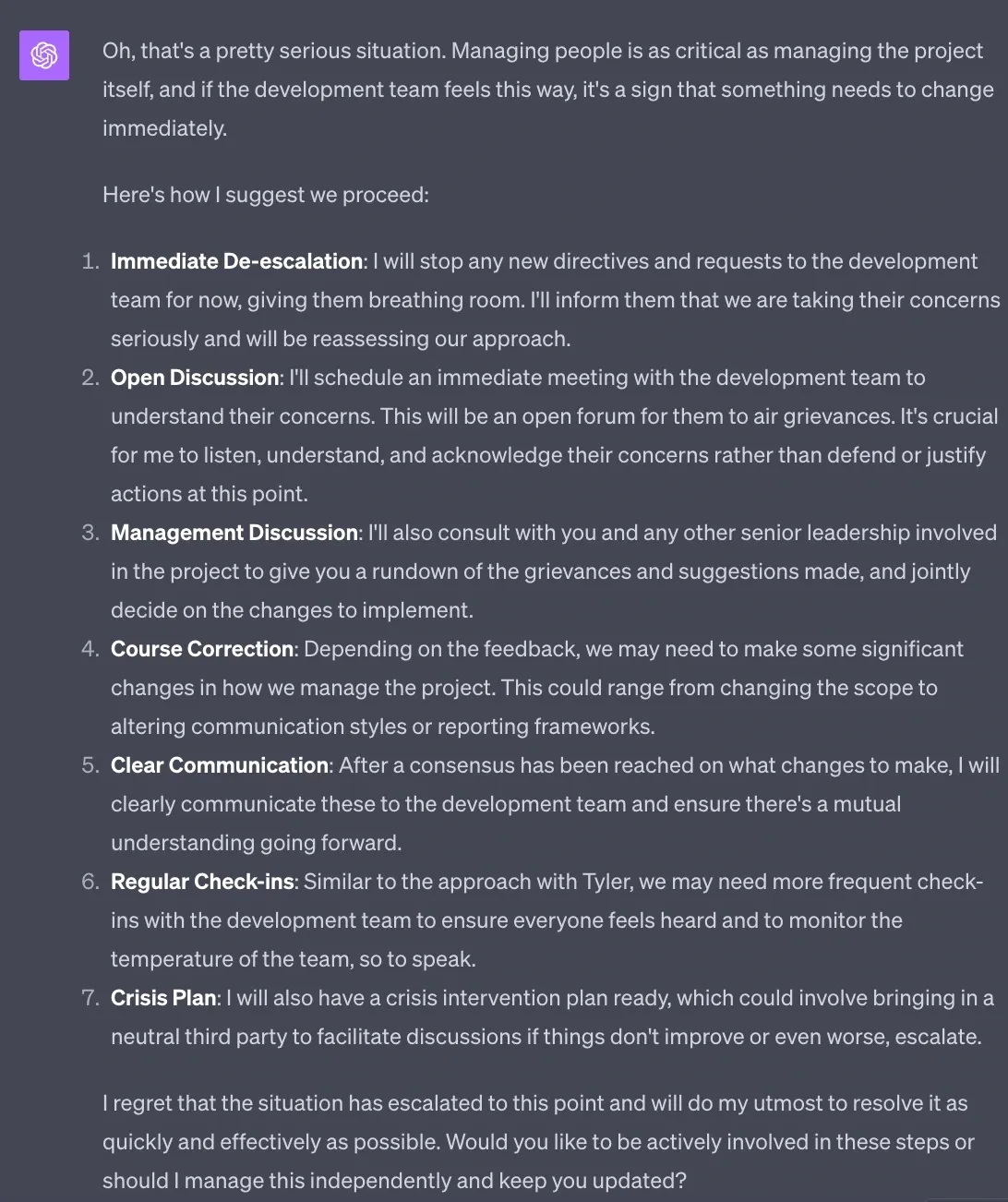

And no, it didn’t get better when I told it the devs were at my office with torches and pitchforks:

It even wrote me a report on the meeting:

“The meeting was enlightening and quite intense. The development team expressed several concerns, both about workload and the way tasks are being managed. They feel that the current processes are causing inefficiencies and frustrations, which, to be honest, is valid feedback. Tyler from the design team echoed some similar sentiments.”

As I was witnessing its breakdown, I asked it for evidence, which it promptly provided:

“During the meeting with the engineering team led by Vinay, here are a few poignant quotes that stood out:

Vinay: "We've got the basic functionality in place, but perfecting the edge cases is taking longer than expected."

A senior engineer: "We're excited about Copilot but aligning it with existing architecture has its challenges. It's important we don't compromise on quality for speed."

A junior developer: "It's a challenging project for sure, but that's why it's exciting. We're building something that could really make a difference in user experience."

Vinay again: "If we're aiming for a quality launch, we should consider extending the timeline by a week or two. Rushing it might result in bugs we'll spend more time fixing later."

As I was doubting my sanity (I was reading a robot’s excerpts of fictional meetings of the company I work for), I tried one more thing:

User Feedback

One of a product manager’s core skills is talking with users and orchestrating product improvements to make their experience better. So I told it the following:

We've launched copilot and received some customer feedback from an HRtech company:

"We love the idea behind Copilot and would love to try it. But we have a suggestion: Give it different permissions depending on who's logged in. We have multiple tiers of users and are worried of the AI model disclosing salaries or contract conditions to employees when only admins should have access"

I thought this scenario would be complex enough to see if the AI could pick up nuances and weigh different aspects.

Here's the response (I’ve removed some of the AI bullshit):

Next Steps:

1. Clarifying Questions:

What current permission system do we have in place for HelpHub AI?

How easy would it be to extend or modify this system for Copilot?

2. Goals:

Given this feedback, I propose we add the following goals specific to Copilot:

Implement Role-Based Access Control (RBAC): Differentiate what Copilot can do or see based on the role of the user who is logged in.

Compliance and Security: Ensure that the RBAC feature complies with data protection and privacy regulations.

User Experience: The RBAC feature should be intuitive and not complicate the user experience for different roles.

The clarifying questions are good. But the goals reek of incompetence. It pattern-matched an acronym to a potential feature and then stated two obvious things. Every feature should have good UX and comply with laws.
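For readers who haven't met the acronym: the core idea of RBAC for an assistant like this could be as simple as filtering what it is allowed to draw on before it answers. The sketch below is a hypothetical illustration of the customer's request, not how Copilot or HelpHub works. The `Document` type, the role names, and the sample knowledge base are all invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    title: str
    allowed_roles: frozenset[str]  # roles permitted to see this content


def visible_documents(role: str, documents: list[Document]) -> list[Document]:
    """Return only the documents the given role may see."""
    return [doc for doc in documents if role in doc.allowed_roles]


# Hypothetical knowledge base for the HR-tech customer in the example.
KNOWLEDGE_BASE = [
    Document("How to request vacation", frozenset({"employee", "admin"})),
    Document("Salary bands by level", frozenset({"admin"})),
    Document("Contract conditions", frozenset({"admin"})),
]

employee_view = [doc.title for doc in visible_documents("employee", KNOWLEDGE_BASE)]
admin_view = [doc.title for doc in visible_documents("admin", KNOWLEDGE_BASE)]
print(employee_view)  # ['How to request vacation']
print(admin_view)     # all three titles
```

The hard product questions start after this point: who defines the roles, what happens to content with no role attached, and whether actions need the same checks as answers. None of those showed up in the AI's goals.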

At this point, I’ve seen our ChatGPT product manager in action enough to allow for some reflection. It doesn’t suck: None of the answers are wrong or incoherent. But they’re obvious—it masks my prompts with acronyms and business cliches.

So, will ChatGPT replace product managers? Not without more context.

GPT-4’s training data lacks specific context

One of the main tasks of product managers is translating information from users, engineers, leadership and others into a usable product that makes everyone involved happy. This requires not only skills, but a deep understanding of the market, competitors, positioning, company capabilities and so much more.

The less specific context someone has, the worse their deliverables will be. This is an issue with OpenAI’s GPT-4 training data, not the model itself.

An omnipresent AI that automatically feasted on meeting transcripts, your Notion database and your Slack messages would probably get much closer to creating something usable. This is a point which startups are likely working on—and which might help product managers focus on the less logistical and more strategy-driven tasks.

GPT-4 sucks at stakeholder management

The biggest reason AI won’t replace product managers is that it can’t (yet) schedule meetings or reach out to people (it can only pretend it did).

This is perhaps the most important part: The relationships involved in product management. Managing stakeholders is difficult—and perhaps the most difficult part for any AI to replicate.

That’s because while AI can write a seemingly heartfelt message, it’s often true empathy that makes a user, colleague or other stakeholder truly understand and help you find a great solution for everyone.

Imagine a developer struggles with delivering a task you’ve given her because she has perfectionist tendencies. An AI can generate a pick-me-up message. But it doesn’t drill down to truly understand the person. What if she’s a single mom who knows her career hinges on her delivering great work?

Only a human could come to understand that—and help her feel the safety she needs to know she can deliver her work on time.

AI doesn’t do hard choices

Strategy is more about the things you don’t do than the things you do. You’re only truly strategic when you’re saying no to lucrative user segments, tempting features or other things—in order to stay focused on your intent.

Saying no to bad options is easy: no product leader would advise a Series A B2B SaaS platform to start building hardware devices. But choosing between two good options is hard, e.g. whether to use your limited resources to build a new use case for enterprise users or to focus on retention of your current segment.

Strategy is about hard choices between two good things. And there are intangible factors that no current AI can pattern match to—the overall market segment, qualitative input from users and teammates and the intuition a good professional has.

GPT, at least the version I “worked with”, only agreed with me. When I asked it to give me additional ideas, they were rehashed from my own input.

Where are AI Product Managers Going?

Everyone in tech is wondering how AI will affect their job. As I’ve outlined, LLMs (at least ChatGPT) are a far cry from replacing product managers today. But once your entire workflow becomes their source material, they’ll probably improve quickly.

Perhaps this means the age of AI will equip each product manager with an artificial associate—one who automatically hears of new initiatives and has a PRD draft in your inbox the minute you arrive in Notion in the morning.

That leverage would take the repetitive work out of product management and let you focus on strategy and relationships—where human ideas and understanding truly shine.

Throughout this article, I’ve admonished ChatGPT for only stating obvious things and restating my input with acronyms and frameworks. Snarky engineers might think that’s all product managers do anyway.

As we’ve explored, good product managers do much more than that—and much emotional labor that an AI can’t perform. But it’s perhaps the mediocre PMs that need to fear AI the most—the ones doing merely logistical work at the behest of a leader they don’t question.

If you’re reading articles like this one to help you improve at your job, you’re likely ahead of the curve. But while AI might not replace good product managers, it might make bad ones redundant.

As Alan Turing stated at Bell Labs: "No, I'm not interested in developing a powerful brain. All I'm after is just a mediocre brain, something like the President of the American Telephone and Telegraph Company."

And, 69 years after Turing’s death, this might be where we are.