ProductHunt and X (Twitter) are awash with AI launches. But a lot of these launches get criticized for being “GPT wrappers”.

A “GPT wrapper” is a product where the vast majority of the magic comes from an LLM. The implication is that these products are commodities: they are easy to build and lack differentiation because they all rely on the same APIs to do the job.

For example, let’s say we have a free Saturday and want to drop something cool on ProductHunt. “This ChatGPT thing seems really good at writing, maybe I can build an ‘AI-powered’ writing assistant extension.” Here’s how we spend our Saturday:

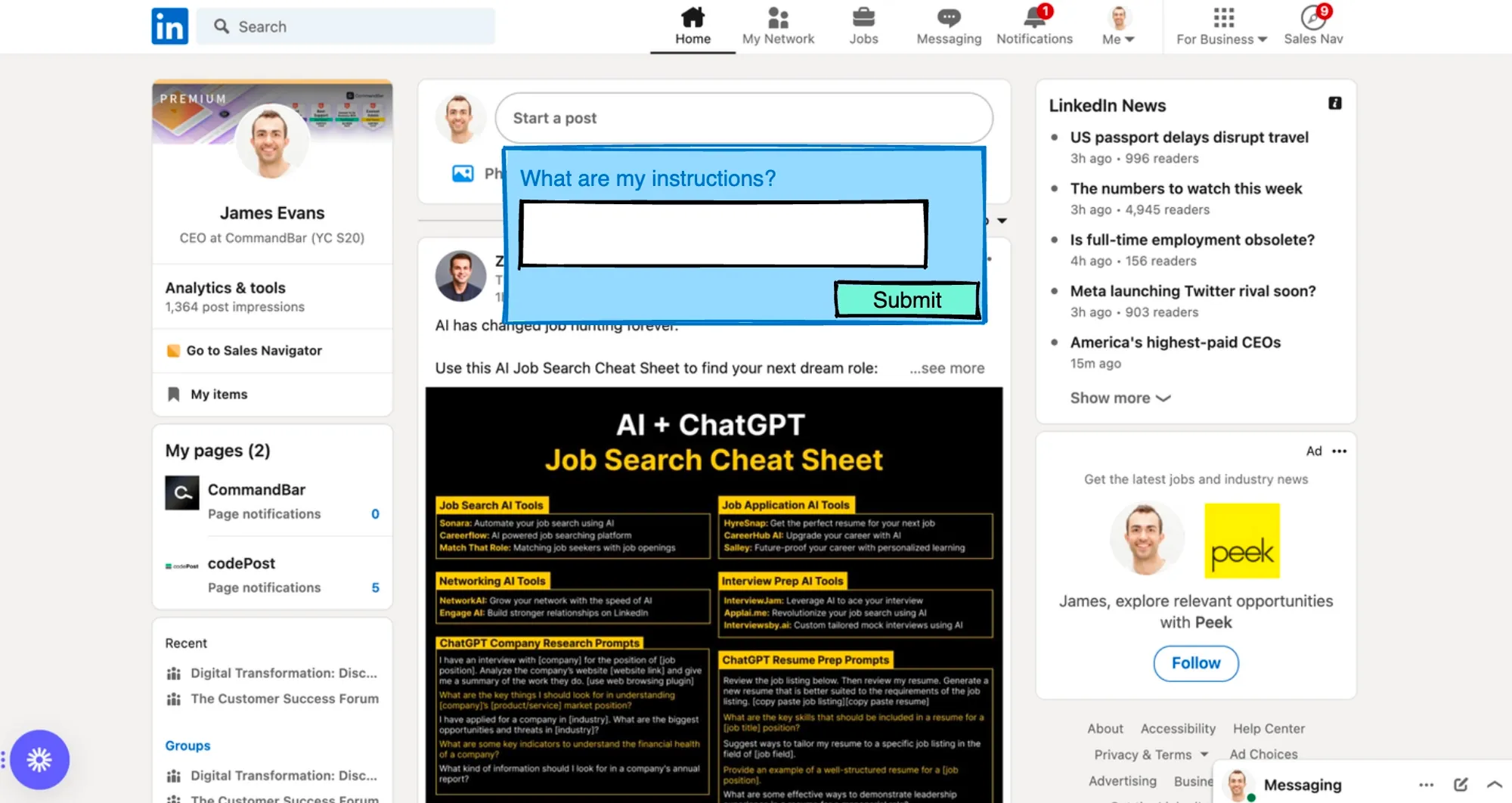

- 9am-11am: Create a basic Chrome extension that lets you summon an AI assistant using a keyboard shortcut from any webpage. [For those interested, this tutorial is very good]. The assistant looks like this. It lets me give instructions and then copies the corresponding text into the field from which I initiated it.

- 11am-12pm: Now that I have the basics of my Chrome extension, I need to figure out how it’s going to generate text. Thankfully, I live in 2023 and have access to the OpenAI API. So all I need to do is figure out how to take the user’s request and pass it to OpenAI in a way that reliably generates a good response. I could just send the user’s request straight through, but I probably want to preface it with something like: “You are an AI writing assistant. Your user has just requested your help with the following task: <insert user request>. Please produce a response that is high quality and pithy.” To figure out exactly what this prompt should be, I can play around with ChatGPT, wrapping requests in several different templates until I find one that is reliably solid.

- 12pm-12:30pm: Break for lunch.

- 12:30pm-1pm: Wire up my extension to the OpenAI API.

- 1pm-1:30pm: Publish my Chrome extension so anyone can download it.

- 1:30pm: Head over to ProductHunt to launch my new extension. While there, I want to check out other similar launches, so I search for “writing assistant”. Oops, maybe I should have done this first. There are so many.
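To show how little is involved, here is a minimal sketch of the 11am-1pm steps: wrap the user's request in a fixed template, then send it to the OpenAI Chat Completions endpoint. It's written in Python for brevity (a real extension would do the same thing in JavaScript). The function names and the default model name are my own assumptions for illustration, and the template wording is just the one quoted above, not a tuned prompt.

```python
import json
import urllib.request

# Template taken from the prompt described above; the exact wording is
# something you would tune by experimenting in ChatGPT.
PROMPT_TEMPLATE = (
    "You are an AI writing assistant. Your user has just requested your help "
    "with the following task: {request}. "
    "Please produce a response that is high quality and pithy."
)


def build_prompt(user_request: str) -> str:
    """Wrap the raw user request in the fixed instruction template."""
    return PROMPT_TEMPLATE.format(request=user_request.strip())


def build_payload(user_request: str, model: str = "gpt-3.5-turbo") -> dict:
    """Build the JSON body for a chat completion request.

    The default model name is an assumption; use whichever model you have
    access to.
    """
    return {
        "model": model,
        "messages": [{"role": "user", "content": build_prompt(user_request)}],
    }


def send(payload: dict, api_key: str) -> str:
    """POST the payload to the Chat Completions endpoint and return the text."""
    req = urllib.request.Request(
        "https://api.openai.com/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )
    with urllib.request.urlopen(req) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

That's the whole "AI" part of the product: one string template and one HTTP call. Everything else is ordinary extension plumbing.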

The product I built is a textbook “GPT wrapper”. There was nothing hard or unique about what I built. And because of that, there are lots of identical products.

What is the opposite of a GPT wrapper? A product that uses GPT but is differentiated and, therefore, likely to be a somewhat unique offering in the market. In other words, a “thick crust” around an AI core, rather than a “thin crust” that basically just repackages an AI endpoint, like in our example above.

Here are a few ways I’m seeing companies and projects differentiate GPT wrappers (some of which we use ourselves at CommandBar).

Historical data

There are several techniques for using data to make models better at a specific task, from k-shot learning to full-on fine-tuning. If you have access to some historical dataset, you can differentiate your product this way.
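As a rough illustration of the lightest-weight option, k-shot prompting, here is a sketch that prepends a few historical request/response pairs to the conversation before the new request. The `Example` type, the function name, and the choice to use the `k` most recent examples are all assumptions of mine; picking the most *relevant* examples instead is a common refinement.

```python
from dataclasses import dataclass


@dataclass
class Example:
    """One historical request and the response that was judged good."""
    request: str
    response: str


def build_few_shot_messages(
    history: list[Example],
    new_request: str,
    k: int = 3,
    system_prompt: str = "You are an AI writing assistant.",
) -> list[dict]:
    """Build a chat message list with up to k historical examples as context."""
    messages = [{"role": "system", "content": system_prompt}]
    # Use the k most recent examples (an arbitrary, hypothetical selection rule).
    recent = history[-k:] if k > 0 else []
    for ex in recent:
        messages.append({"role": "user", "content": ex.request})
        messages.append({"role": "assistant", "content": ex.response})
    # The actual request always goes last.
    messages.append({"role": "user", "content": new_request})
    return messages
```

The resulting list can be sent as the `messages` field of a chat completion request. The differentiation comes from the contents of `history`, which a competitor without your data can't reproduce.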

Human feedback

Understanding what outputs are good vs bad also lets you train your model. Make sure to include mechanisms to gather this feedback in your interface. For example, in our HelpHub product, we offer users the ability to leave feedback on every AI chat result.
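To make this concrete, here is a minimal sketch of what a feedback log might look like and how it could feed back into training. This is not how HelpHub works internally; the `Feedback` record, the thumbs-up/down `helpful` flag, and the prompt/completion output format are hypothetical choices for illustration.

```python
from dataclasses import dataclass


@dataclass
class Feedback:
    """One user rating of one AI response (hypothetical schema)."""
    prompt: str
    response: str
    helpful: bool  # True for thumbs up, False for thumbs down


def helpful_rate(log: list[Feedback]) -> float:
    """Share of rated responses marked helpful; 0.0 if nothing was rated."""
    if not log:
        return 0.0
    return sum(f.helpful for f in log) / len(log)


def to_training_examples(log: list[Feedback]) -> list[dict]:
    """Keep only upvoted responses as prompt/completion training pairs."""
    return [
        {"prompt": f.prompt, "completion": f.response}
        for f in log
        if f.helpful
    ]
```

Tracking `helpful_rate` over time tells you whether prompt or model changes are actually improvements, and the upvoted pairs are candidate k-shot or fine-tuning examples.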

Prompt engineering

There is disagreement about whether better prompts actually constitute a durable competitive advantage. My expectation is that good prompts will quickly be open-sourced. But it's still the early days of prompt engineering, and you may be able to get some alpha by investing in becoming a LangChain or LlamaIndex wizard.

Brand, GTM, and Community

This is obviously a huge topic and deserves more than a paragraph. But I did want to make the point that I think too many startups/makers worry too much about building a differentiated product and too little about building a differentiated brand and community. There are plenty of huge businesses that started out with simple commoditized GPT wrappers and then differentiated through GTM (which lays the groundwork for the product differentiation described above). Jasper.ai and copy.ai are two examples that come to mind.

Is your product AI-enabled or AI-enhanced?

If you go back to that ProductHunt search for “writing assistant”, you’ll see lots of writing assistants launched by companies whose products already let users write without AI.

- SEO Writing Assistant by Semrush

- Notion AI

- Coda AI

- ClickUp AI

- GrammarlyGO

These are incumbents launching AI features, not upstarts getting started with an AI product. Being an incumbent brings three critical advantages:

You already have the data:

Superhuman can write emails for you better than a generic email-writing assistant because it has tons and tons of examples of how you’ve written emails in the past. The same goes for Notion or ClickUp docs.

You already have the users:

Getting feedback on AI-produced results is much more effective when you have millions of people using your product than when you have a few dozen. So in this way, incumbents can iterate much faster than startups without existing distribution.

You offer more than AI:

Your AI doesn’t have to be great to draw more users and keep the feedback cycle going. Notion is already great without AI. I personally don’t find Notion AI to be very useful today, but I’ve tried it a few times because I love Notion, and I’ll continue using it. So Notion is going to get feedback from me and our team on their AI features, even though we wouldn’t have switched to Notion purely because of their AI features.