AI wasn’t built in a day. It took us centuries of mathematical pondering, passionate debates, and imaginative experiments to get to where we are now. Sure, there aren’t any flying cars (yet), but AI has undoubtedly crept into our day-to-day lives in recent years.

In this story, we’ll take a walk down memory lane, dodging the digital dinosaurs, to explore the origins of AI and what had to happen for us to be able to chat with machines without them asking, "Have you tried turning it off and on again?" But before we do, we must understand what AI is and isn’t.

Artificial Intelligence (AI) enables machines to mimic human intelligence by learning, adapting, and making decisions based on data. For many, AI conjures up robots and futuristic gadgets, but it is far more than that. AI also comes in the simplest of forms, like the recommendation algorithm on a music app or the autocorrect feature on your smartphone keyboard.

While quintessentially modern in many of our minds, AI is rooted deep in the annals of human history. The relentless human endeavor to replicate life, or aspects of it, traces back to ancient mythology.

The first robot god

Long before we were captivated by algorithms, our ancestors were weaving tales of animated statues and autonomous beings. Greek mythology, rich and diverse, prominently features tales of mechanical beings. A standout example is Talos.

Talos, often described as the original robot, was a giant automaton made of bronze. Crafted by Hephaestus, the god of fire and metalworking, Talos was given to King Minos of Crete. This bronze sentinel had a singular mission: to guard the shores of Crete against invaders. He did so by hurling boulders at approaching enemy ships and heating himself red-hot to embrace intruders. While Talos may have lacked a USB port, his myth illuminates an early human yearning to create life or lifelike entities through artificial means.

Mythology is full of other artificial beings, some stranger than others. What unites them is more philosophical than technical: most tales center on a powerful few who create or control these beings, and they use them to “interrogate boundaries between natural and artificial, between living and not.” Sound familiar?

20th Century: When machines got brainy... Sort of

Dynamic computing

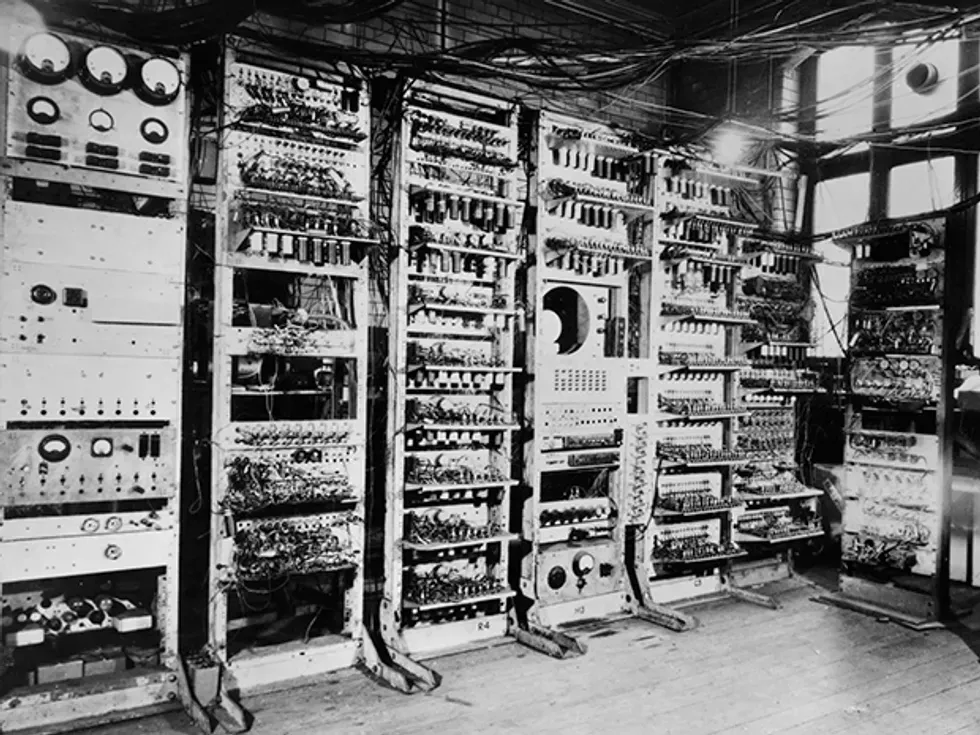

Fast forward a few thousand years, and AI really starts to pick up the pace. As you’ll see in the next few paragraphs, the biggest challenge for AI was never a lack of theory. It was hardware: until the late 1940s, computers couldn’t store the programs they ran. They could execute commands but not remember them, let alone learn from them.

Enter Alan Turing. When he began his work on computability in the 1930s, he was conceptualizing machines vastly different from today's dynamic, multitasking computers. Each of these early theoretical machines was built for a single job: its fixed table of rules could carry out one specific computation and nothing else.

Turing's groundbreaking idea, the Universal Turing Machine (UTM), introduced in his 1936 paper "On Computable Numbers," tackled this limitation. The concept was astonishingly visionary: rather than a machine designed to perform one specific computation, the UTM was a machine that could simulate any other Turing machine.

To achieve this, Turing introduced the idea of storing instructions (or a "program") in the machine's memory. Rather than being constrained to one function, the machine could read a program, stored as data, and then execute the instructions detailed in that program. Essentially, data (the program) could dictate the machine's behavior.

Today, when we talk about software, apps, or even firmware updates, we're essentially discussing variations of Turing's revolutionary idea.
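To make the stored-program idea concrete, here is a minimal Python sketch of a Turing machine simulator, built under a few simplifying assumptions. The function `run` and the rule tables `INCREMENT` and `INVERT` are hypothetical names chosen for illustration. In Turing's actual construction, the universal machine reads an encoded description of the other machine from its own tape; here, for readability, each "program" is a Python dictionary handed to the simulator. The core point survives the shortcut: one fixed piece of machinery whose behavior is chosen entirely by the data it is given.

```python
from collections import defaultdict

# A "program" is plain data: (state, symbol read) -> (symbol to write, head move, next state).
# Moves are -1 (left) or +1 (right); "_" marks a blank tape cell.
Program = dict[tuple[str, str], tuple[str, int, str]]

BLANK = "_"

# Hypothetical example program: add 1 to a binary number.
INCREMENT: Program = {
    ("start", "0"): ("0", +1, "start"),      # scan right to the end of the number
    ("start", "1"): ("1", +1, "start"),
    ("start", BLANK): (BLANK, -1, "carry"),  # past the last digit: step back and add 1
    ("carry", "1"): ("0", -1, "carry"),      # 1 + carry = 0, keep carrying left
    ("carry", "0"): ("1", -1, "halt"),       # 0 + carry = 1, done
    ("carry", BLANK): ("1", -1, "halt"),     # ran off the left edge: write a new leading 1
}

# Hypothetical example program: flip every bit.
INVERT: Program = {
    ("start", "0"): ("1", +1, "start"),
    ("start", "1"): ("0", +1, "start"),
    ("start", BLANK): (BLANK, -1, "halt"),
}


def run(program: Program, tape_input: str, max_steps: int = 10_000) -> str:
    """Execute any rule table on the given tape and return the final tape contents."""
    tape = defaultdict(lambda: BLANK, enumerate(tape_input))  # unbounded in both directions
    head, state, steps = 0, "start", 0
    while state != "halt":
        if steps == max_steps:
            raise RuntimeError("machine did not halt within max_steps")
        write, move, state = program[(state, tape[head])]
        tape[head] = write
        head += move
        steps += 1
    cells = (tape[i] for i in range(min(tape), max(tape) + 1))
    return "".join(cells).strip(BLANK)


print(run(INCREMENT, "1011"))  # prints 1100 (11 + 1 = 12)
print(run(INVERT, "1011"))     # prints 0100
```

Swapping `INCREMENT` for `INVERT` changes what the machine does without touching `run` at all, which is the essence of treating a program as data.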

The Turing Test

Perhaps one of Turing's most enduring legacies in the realm of AI is the "Turing Test." In his 1950 paper, "Computing Machinery and Intelligence," he posed a deceptively simple question: "Can machines think?" Rather than answer it directly, Turing proposed an experiment he called the "imitation game": if a human judge held text-based conversations with both a human and a machine and couldn't reliably tell which was which, then the machine could be said to "think."

AI's toddler days

As the world was slowly picking up the pieces after the devastating impact of World War II, an emerging community of academic visionaries turned their gaze toward the future.

In the summer of 1956, Dartmouth College became the epicenter of what would become a seismic shift in scientific thought. This wasn't just any summer gathering but a dedicated workshop built on the conjecture that every aspect of learning or intelligence could be so precisely described that a machine could be made to simulate it.

Over eight weeks, these pioneers, among them John McCarthy, Marvin Minsky, Nathaniel Rochester, and Claude Shannon, locked horns, exchanged ideas, and ultimately shaped what we today know as the field of Artificial Intelligence.

AI's awkward teen years

About a decade later, in the mid-1960s, MIT's Joseph Weizenbaum created ELIZA, an early natural language processing program. More therapist than machine, ELIZA showed that computers could interact in a surprisingly human-like manner, offering users a unique, if sometimes quirkily off-mark, conversational experience.

ELIZA's best-known script, DOCTOR, mimicked a Rogerian psychotherapist. The program matched keywords in the user's statements and rephrased them as questions, giving the illusion of understanding and empathy. For instance, if a user typed "I'm feeling sad," ELIZA might respond with "Why do you feel sad?"
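ELIZA's basic trick can be sketched in a few lines of Python. This is a hedged, minimal illustration, not Weizenbaum's program, which was written in MAD-SLIP and driven by a much richer script of ranked keywords with decomposition and reassembly rules. The sketch assumes just three hypothetical patterns, a small table of pronoun swaps, and a generic fallback reply; the names `RULES`, `REFLECTIONS`, `reflect`, and `respond` are invented for illustration.

```python
import re

# Pronoun swaps so that a captured fragment points back at the user ("my" -> "your").
REFLECTIONS = {
    "i": "you", "i'm": "you're", "me": "you", "my": "your",
    "am": "are", "you": "I", "your": "my",
}

# Hypothetical (pattern, reply template) pairs, tried in order; the first match wins.
RULES = [
    (re.compile(r"i'?m feeling (.*)", re.IGNORECASE), "Why do you feel {}?"),
    (re.compile(r"i need (.*)", re.IGNORECASE), "Why do you need {}?"),
    (re.compile(r"i am (.*)", re.IGNORECASE), "How long have you been {}?"),
]
FALLBACK = "Please go on."  # used when no pattern matches


def reflect(fragment: str) -> str:
    """Swap first- and second-person words in a captured fragment."""
    return " ".join(REFLECTIONS.get(word, word) for word in fragment.lower().split())


def respond(statement: str) -> str:
    """Rephrase the user's statement as a question using the first matching rule."""
    text = statement.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.fullmatch(text)
        if match:
            return template.format(reflect(match.group(1)))
    return FALLBACK


print(respond("I'm feeling sad"))              # Why do you feel sad?
print(respond("I am worried about my exams"))  # How long have you been worried about your exams?
```

Nothing here understands sadness or exams; the illusion of empathy comes entirely from pattern matching and pronoun swapping, which is also why such replies can land quirkily off-mark.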

As AI strutted into the 1970s, it carried the lofty promises of its childhood. But, like any teenager facing the bewildering mess of puberty, AI hit some growth challenges. The once-gushing funding dried up, plunging the field into what's grimly termed the "AI winter." Crypto flashbacks, anyone?

By the late 1980s, winds of change began to blow. Undeterred by earlier setbacks, researchers kept refining and redefining AI's potential.

The rise from pubescence

The late 1990s saw AI put on its big bot pants. Much of the groundwork had been laid by the "expert systems" that flourished in the 1980s: computer programs with databases brimming with domain-specific knowledge. Rather than making your PC smarter than you, they aimed to make it as smart as the leading expert in a particular field.

Deep Blue brought that ambition to the chessboard. Its development began in 1985 at Carnegie Mellon University under the name ChipTest, but it wasn’t until the late 1990s that its relevance to the advancement of AI became clear. In short, Deep Blue was a supercomputer developed by IBM, specifically designed to play the ancient and highly complex game of chess at a masterful level.

In 1996, Deep Blue faced World Chess Champion Garry Kasparov and lost the match 4 to 2. The team went back, refined its algorithms, and bolstered its database, and a year later it clinched victory in a six-game rematch, 3.5 to 2.5.

21st Century: AI finds its groove

The turn of the century marked a quiet but profound shift for AI. No longer a mere resident of academia or the darling of niche enthusiasts, AI began its dance into the mainstream.

In the early 2000s, the emergence of "Big Data" created a foundation for AI's accelerated growth. With vast amounts of information at its disposal, AI transitioned from basic pattern recognition tasks to more advanced predictive and analytical functions.

In 2011, IBM's Watson won "Jeopardy!" against two of the show's greatest champions, Ken Jennings and Brad Rutter. The victory wasn't just a cool party trick: it signaled AI's potential to engage with human language, even if Watson wasn’t great at small talk.

Image recognition advanced just as dramatically. In 2012, AlexNet, a deep convolutional neural network, won the ImageNet image-classification challenge with a top-5 error rate of about 15%, far ahead of the runner-up's roughly 26%, showing that AI could interpret visual data with a degree of accuracy previously thought unattainable.

By the mid-2010s, AI wasn't just for the tech nerds anymore. Siri was finishing our sentences, Alexa played DJ at our house parties, and Google Assistant became our go-to for those tricky trivia questions. These voice assistants revolutionized the way we chatted with our gadgets, making tech feel less like a machine and more like a mate.

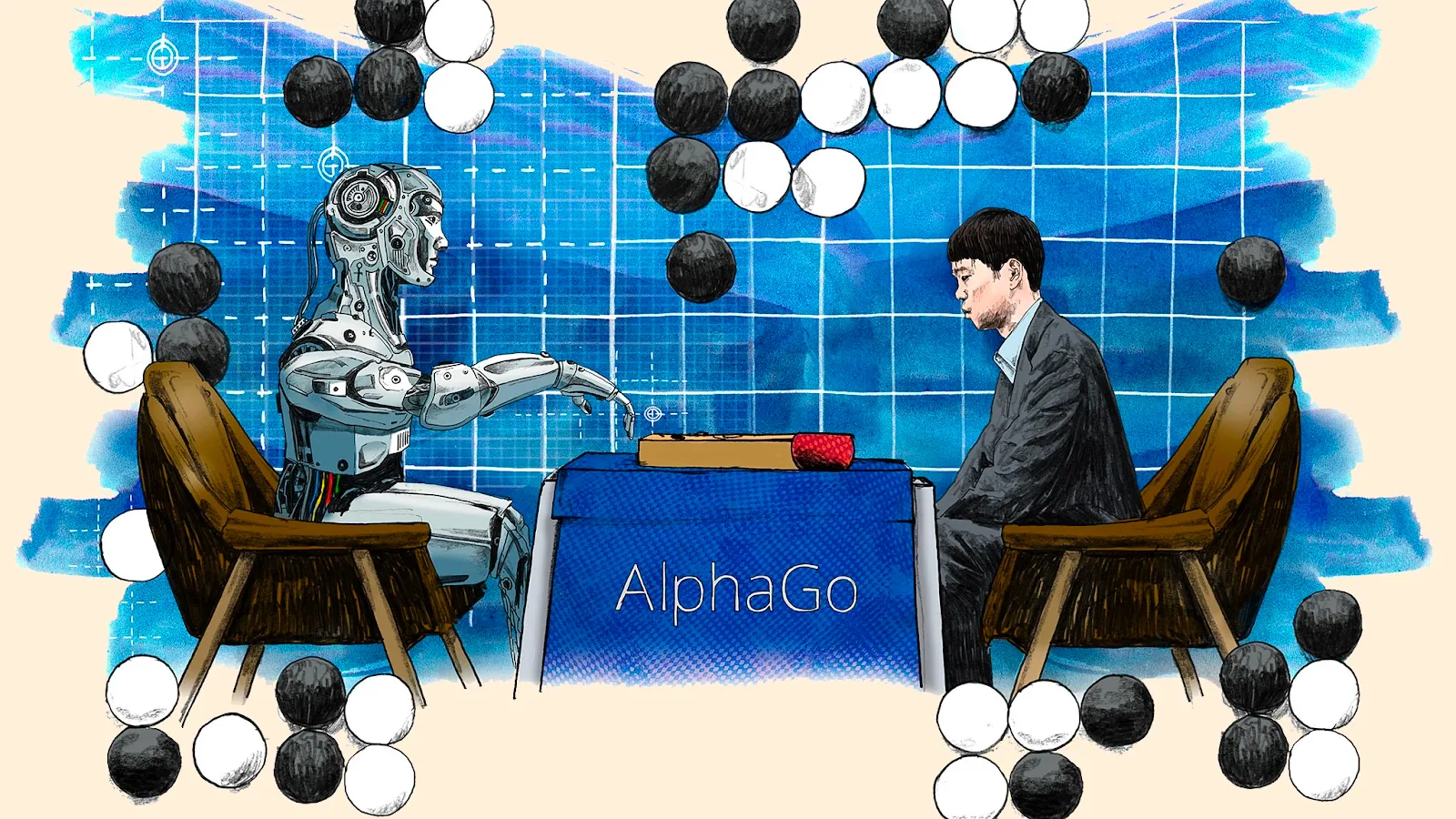

In 2016, the AI community witnessed another breakthrough when Google DeepMind's AlphaGo defeated Lee Sedol, one of the world's strongest Go players, four games to one. Given the game's complexity and the depth of strategy involved, this victory underscored AI's capability to handle intricate and nuanced tasks.

That brings us to now. Generative AI has held the stage for the better part of the past two years, with tools like Midjourney and ChatGPT leaving some worried for their jobs. What comes next is hard to say. As dystopian as it may seem, artificial general intelligence (AGI) doesn’t seem as far off as it once did. Back in the 2010s, a common estimate put it roughly 50 years away. After the emergence of Large Language Models (LLMs), AI pioneer Geoffrey Hinton revised his own estimate, suggesting it might be just 20 years away, if not sooner.

Until then, addressing AI's ethical implications only grows more urgent. We're charting new territory where machines intersect with human values. It's thrilling to witness such innovation, but we must ensure that AI's growth is guided by principles that benefit society at large.

A word to the wise: Be nice to your AI.